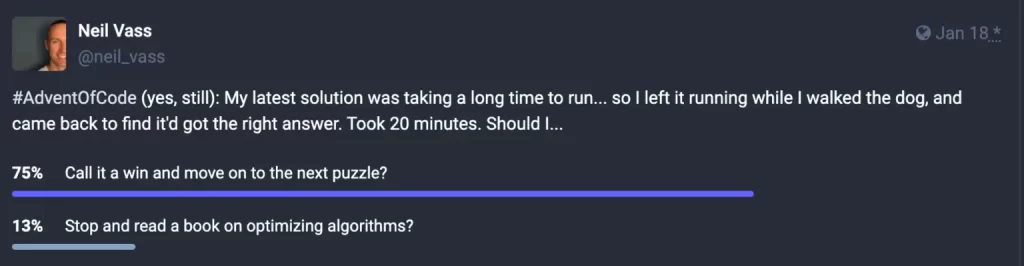

I’m a big fan of Advent of Code, it’s taught me about lots of topics. Maybe the most generally useful lesson I’ve taken from it followed the “should I stop and read a book” post I mentioned a few years ago:

There’s lots of puzzles where my solution looks like a reasonable approach, works just fine and gets the answer in a second or two for the examples and part 1, and then when I try the slightly larger part 2 … it takes 20 minutes, or an hour, or can run overnight and still be going the next day with no end in sight. The about page promises “every problem has a solution that completes in at most 15 seconds on ten-year-old hardware”, which makes me keen to find out what I’m missing.

This particular example was part 2 of 2022, day 23: Unstable Diffusion. When I get stuck, I really try to avoid looking up how others have solved the puzzle. Instead, I try to think about how to describe the type of problem it is and see what approaches exist. In other puzzles that’s led me to the shoelace formula, Karger’s min cut algorithm, and lots more.

Looking at this day 23 puzzle: it’s got elves standing on a grid, going through multiple rounds where they decide where to move based on what neighbouring elves do. That sounds similar to Conway’s Game of Life, a famous simulation with simple rules that lead to surprisingly complex outcomes. In Life, cells turn to alive or dead each round depending on how many neighbours they have. I bet people have written about calculating that quickly, and their ideas might help me think of optimisations for “elf or not elf” patterns in this puzzle’s grid.

This StackOverflow answer pointed me to Michael Abrash’s Graphics Programming Black Book. What a find!

Lessons from the 90s

Quake was an incredibly impressive PC game: 3D graphics that were way ahead of its time, an immersive soundtrack from Nine Inch Nails, and some amazing “working out loud” insight sharing on how it was programmed, most notably from Michael Abrash in magazine columns and conference talks. Abrash collected lots of his writing into a book, which is available online thanks to blogger James Gregory.

The book grabbed me right from the chapter 1 title: “The Best Optimizer Is between Your Ears”, and its acknowledgement of a then-current question: “Is performance still an issue in this era of cheap 486 computers and super-fast Pentium computers? You bet.”

Lots of the book is about particulars of mid-90s assembly instructions and processor behaviours — I skipped those chapters — with various other chapters that have timeless advice about not jumping into this kind of detail too early. The biggest opportunities come while the approach is still high-level, understandable, and open to creative rethinking.

Give your mind time and space to wander around the edges of important programming problems before you settle on any one approach. I titled this book’s first chapter “The Best Optimizer Is between Your Ears,” and that’s still true; what’s even more true is that the optimizer between your ears does its best work not at the implementation stage, but at the very beginning, when you try to imagine how what you want to do and what a computer is capable of doing can best be brought together.

— Chapter 10: Patient Coding, Faster Code

Lots of the detailed choices — tweaking in-memory representation, unrolling loops, and other choices that require impressive knowledge — can just be tinkering round the edges of a fundamentally slow approach, and can make you miss huge opportunities to rethink things. Looking at readers’ responses to “improve my code” challenges he set:

Three levels of optimization were evident in the word-counting entries I received in response to my challenge. I’d briefly describe them as “fine-tuning,” “new perspective,” and “table-driven state machine.”

— Chapter 16: There Ain’t No Such Thing as the Fastest Code

Some reader-submitted examples were brilliant — rethinking the whole approach and realising huge swathes of work would be unnecessary with a little reframing — while others were unbelievable. For a “make this run faster, with your solution as no more than 400 lines of C code” challenge, the winning submission used its 400 lines of C to generate 17,000 lines of assembly, plus a 64k lookup table, which all executed in a stunningly fast time. I think I’ll stick to the middle option (”new perspective”) for my own work.

Optimising Life

Chapter 17: The Game of Life was the most relevant for me — it looks at improving runtime for a game that’s very similar to the elf game I was working on, and specifically concentrates on the “reframe the problem” skill rather than detailed processor instruction tricks: “in this chapter, we’ll discuss some high-level language optimizations that can be applied by mere mortals within a reasonable period of time”.

It starts with a first-pass program for running Life, which runs far too slowly. Abrash decided from looking at it that he could guess where the time was getting spent and was keen to improve those parts. But he reminds us: “The first rule of optimization is: Only optimize where it matters. Use a profiler, or risk making a fool of yourself.”

I was wrong. Wrong, wrong, wrong. (But at least I was smart enough to use a profiler before actually writing any new code.) … As you can see, the time taken by

— Chapter 17: The Game of Lifedraw_pixel(),copy_cells(), and everything other than calculating the next generation is nothing more than noise. We could optimize these routines right down to executing instantaneously, and you know what? It wouldn’t make the slightest perceptible difference in how fast the program runs.

This is a lesson I’d learned already, from real-life work not Christmas puzzles — and was already using cProfile to inspect my slow Python code and see where the time went. Trying ideas had only got me from 20+ minutes down to 18.75 minutes, I needed a new perspective. (Note: when I moved from Python to TypeScript for Advent of Code, I never found a similarly useful tool for inspecting CPU-intensive programs. Any advice?)

# Runs in 18.75 minutes, can we improve?

# 22169768 function calls in 1125.186 seconds

# Ordered by: cumulative time

# ncalls tottime percall cumtime percall filename:lineno(function)

# 1 0.000 0.000 1125.186 1125.186 {built-in method builtins.exec}

# 1 0.000 0.000 1125.186 1125.186 <string>:1(<module>)

# 1 0.000 0.000 1125.186 1125.186 day23.py:119(play)

# 1 1.602 1.602 1125.183 1125.183 day23.py:53(play)

# 1003 1.305 0.001 1000.396 0.997 day23.py:58(<listcomp>)

# 2595764 5.451 0.000 999.091 0.000 day23.py:28(propose_move)

# 2595764 992.575 0.000 992.575 0.000 day23.py:41(<setcomp>)

# 2595764 123.019 0.000 123.019 0.000 {method 'count' of 'tuple' objects}

The rest of this chapter was a series of lightbulb moments for me, that helped me rethink what I was trying to achieve.

Some facts that were right in front of me, but I hadn’t considered:

- After a few dozen generations, most of the cellmap consists of cells in the off state.

- There are many possible cellmap representations other than one bit-per-pixel.

- Cells change state relatively infrequently.

Advice on re-examining the task:

Relax. Step back. Try to divine the true nature of the problem. The object is not to count neighbors and check cell states as quickly as possible; that’s just one possible implementation. The object is to determine when a cell’s state must be changed and to change it appropriately, and that’s what we need to do as quickly as possible.

— Chapter 17: The Game of Life

This idea — that we’ve never been asked to check all cells every round, that’s just an approach I picked and then focused on doing faster — ties very much in to some quotes I know about work in general.

- “There is nothing quite so useless, as doing with great efficiency, something that should not be done at all.” — Peter Drucker

- “Simplicity — the art of maximizing the amount of work not done — is essential” — Principles behind the Agile Manifesto

More advice, from when Abrash opened up the “improve Life” challenge to readers:

Most cells in a Life cellmap are dead, and in most cases all the neighbors are dead as well. This observation enabled me to get a major speed-up by scanning the cellmap for the few non-zero bytes (cells that were either alive or have neighbors that are alive). Although that was a big improvement, it still required my code to touch every cell to check its state. David has improved on this by maintaining a change list; that is, a list of pointers to cells that change in the current generation.

— Chapter 18: It’s a Plain Wonderful Life

These were the kind of pointers I needed to go from the day23.py I’d been working on, to a much-different day23-faster.py that does a little more on every visit to an elf cell — noting its neighbours and updating their “adjacent” lists — so that each round only visits the few, relevant cells that might be changing. This solves the puzzle in 12 seconds rather than 20+ minutes, enough of an improvement for me to happily call it done.

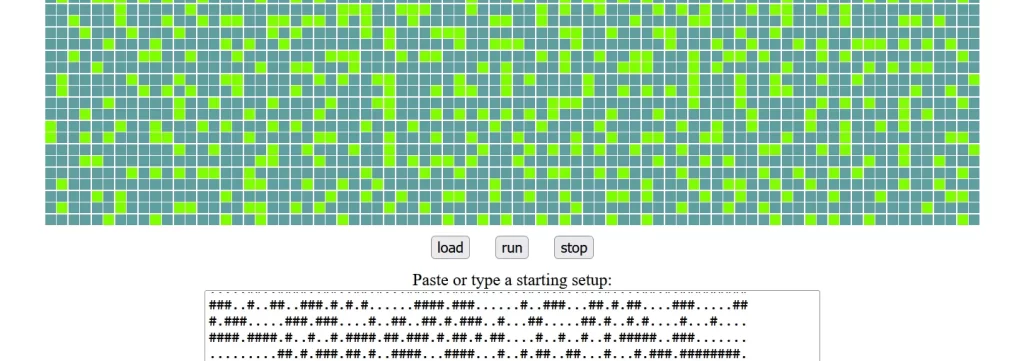

The Advent of Code version just wants some final stats, no visuals necessary. To explain more about how it works, and to practice the visual side as well, I’ve started an interactive demo at https://neil-vass-2.com/grid-games/. That site’s my “blog about blogging” where I’m very slowly making a new blogging stack. It’s all client-side CSS and JavaScript, you can see the files in GitHub. This page-in-progress will get better as I get more time and practice with it.

In the meantime, these optimisation ideas have helped me get better at all kinds of coding problems, so it’s far less common for me to need to go for a dog walk to see if that lets my code finish running. Sorry Archie.